Overview

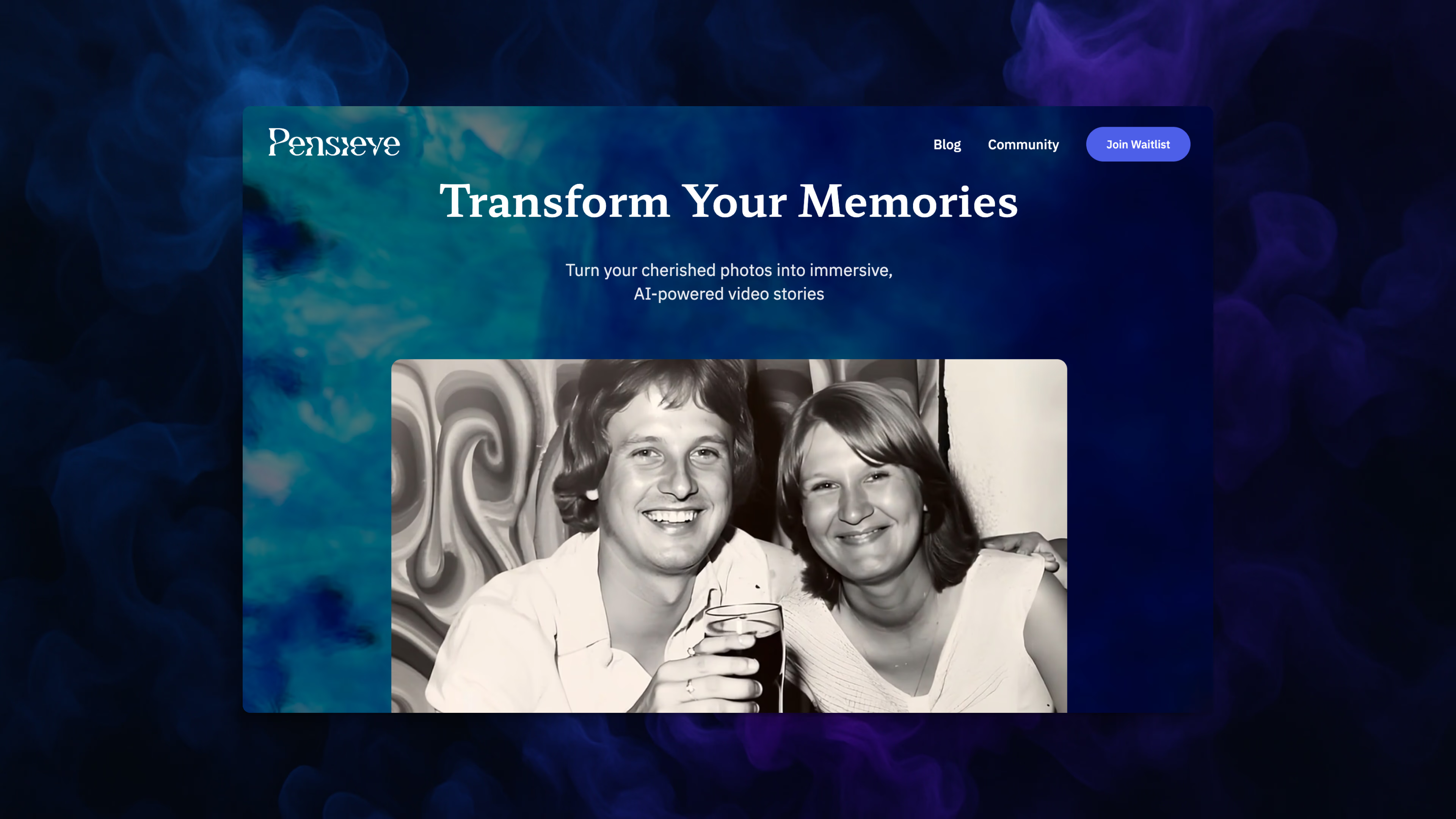

Pensieve is a platform that transforms static 2D moments into emotive, animated, spatial memories. Rather than treating memories as recordings to be corrected, Pensieve honours how human memory actually works: fluid, selective, and emotional. Users start with any image or video, whatever the format or quality, and Pensieve reconstructs it into something they can feel again.

The GenAI Studio

At the heart of Pensieve is a custom-built generative AI studio that gives users creative control over how a memory is reconstructed.

- Upscale and restore low-resolution or damaged images — scanned photos, Polaroids, digital snapshots

- Subtle animation — eye-blinks, light breeze, small gestures — to gently awaken a still moment

- Ambient soundscape generation that captures the feel of the memory, not just background noise

- Intentional stylisation, leaning into the soft, surreal qualities of recollection

2D to Spatial Video

The hardest technical challenge in Pensieve is the one the user never sees: converting a flat 2D video into a true spatial video, ready for playback on Apple Vision Pro and Meta Quest. A single tap triggers a multi-stage processing pipeline that bridges the gap between standard video and immersive 3D.

- AI depth estimation: IW3 runs monocular depth estimation on each frame to generate a per-pixel depth map, inferring spatial structure from a single camera view

- Stereo synthesis: each frame is warped according to its depth map to synthesise a second viewpoint, producing a side-by-side stereo video that simulates binocular vision

- MV-HEVC encoding: the stereo pair is encoded as a multiview video with x265 using MV-HEVC, the multiview extension of HEVC that Apple's spatial video format is built on, so a single output targets both Apple Vision Pro and Meta Quest

- Spatial packaging: the encoded stream is muxed into a QuickTime container with the metadata tags that Apple Vision Pro and Meta Quest use to recognise and render spatial video

- Toolchain orchestration: FFmpeg, MP4Box, Bento4, and x265 run in a fixed sequence, each handling a different stage of the transcode and packaging pipeline
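
To make the depth-to-stereo step more concrete, here is a minimal sketch of depth-based view synthesis on a single row of pixels. It is an illustration under stated assumptions, not Pensieve's or IW3's actual method: the function names, the `max_disparity` parameter, and the convention that depth values are normalised nearness in [0, 1] are all hypothetical, and a production pipeline would work on full frames with a far more careful hole-filling strategy.

```python
from typing import List, Optional


def synthesise_right_row(
    left_row: List[int], nearness_row: List[float], max_disparity: int
) -> List[int]:
    """Forward-warp one row of the left view into an approximate right view.

    nearness_row holds normalised nearness in [0, 1] (1.0 = closest to the
    camera, an assumed convention), so nearer pixels shift further.
    """
    width = len(left_row)
    right: List[Optional[int]] = [None] * width
    winner = [-1.0] * width  # z-buffer: the nearest source pixel wins

    for x in range(width):
        shift = round(nearness_row[x] * max_disparity)
        target = x - shift  # in the right-eye view, content moves left
        if 0 <= target < width and nearness_row[x] > winner[target]:
            right[target] = left_row[x]
            winner[target] = nearness_row[x]

    # Holes open up to the right of foreground objects. Fill them from the
    # pixel on their right, which belongs to the revealed background.
    last: Optional[int] = None
    for x in range(width - 1, -1, -1):
        if right[x] is None:
            right[x] = last
        else:
            last = right[x]

    # Any holes still left sit at the right edge; fill them from the left.
    filled: List[int] = []
    previous = 0
    for value in right:
        previous = previous if value is None else value
        filled.append(previous)
    return filled


def side_by_side(
    left: List[List[int]], nearness: List[List[float]], max_disparity: int
) -> List[List[int]]:
    """Build a side-by-side frame: left view, then the synthesised right view."""
    return [
        row + synthesise_right_row(row, depth_row, max_disparity)
        for row, depth_row in zip(left, nearness)
    ]


if __name__ == "__main__":
    # A bright foreground object (200) in front of a dark background (10).
    row = [10, 10, 200, 200, 10, 10]
    near = [0.0, 0.0, 1.0, 1.0, 0.0, 0.0]
    print(synthesise_right_row(row, near, max_disparity=1))
    # prints [10, 200, 200, 10, 10, 10]
```

In the example the foreground object moves one pixel to the left in the right-eye view, and the gap it leaves is filled with background. That horizontal offset between the two views is what the viewer perceives as depth.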

Architecture

The Pensieve API is fully serverless, deployed to AWS using SST. The processing pipeline runs inside a container on Lambda, keeping baseline costs near zero while scaling automatically with demand.

- FastAPI application on Lambda for task management, with asynchronous processing via a containerised Lambda function

- S3 for input and output video storage, DynamoDB for task state, API Gateway for routing

- Heavy video processing dependencies — FFmpeg, x265, MP4Box, Bento4, IW3 — packaged into a single container image

- Infrastructure-as-code via SST with per-stage deployment (dev, prod) and API key protection
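
The split between task management and asynchronous processing can be sketched as a small task state machine. This is a hedged illustration only: the status names, the `Task` fields, and the `InMemoryTaskStore` class are hypothetical stand-ins (an in-memory dict in place of the DynamoDB table, a plain callable in place of the containerised Lambda), not the project's real schema or API.

```python
import time
import uuid
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, Optional


class TaskStatus(str, Enum):
    PENDING = "PENDING"
    PROCESSING = "PROCESSING"
    COMPLETED = "COMPLETED"
    FAILED = "FAILED"


# Legal transitions; COMPLETED and FAILED are terminal states.
ALLOWED = {
    TaskStatus.PENDING: {TaskStatus.PROCESSING, TaskStatus.FAILED},
    TaskStatus.PROCESSING: {TaskStatus.COMPLETED, TaskStatus.FAILED},
    TaskStatus.COMPLETED: set(),
    TaskStatus.FAILED: set(),
}


@dataclass
class Task:
    task_id: str
    input_key: str  # assumed: object key of the uploaded video in S3
    status: TaskStatus = TaskStatus.PENDING
    output_key: Optional[str] = None
    error: Optional[str] = None
    updated_at: float = field(default_factory=time.time)


class InMemoryTaskStore:
    """Stand-in for a task table keyed by task_id (hypothetical)."""

    def __init__(self) -> None:
        self._items: Dict[str, Task] = {}

    def create(self, input_key: str) -> Task:
        task = Task(task_id=str(uuid.uuid4()), input_key=input_key)
        self._items[task.task_id] = task
        return task

    def get(self, task_id: str) -> Task:
        return self._items[task_id]

    def transition(
        self,
        task_id: str,
        new_status: TaskStatus,
        output_key: Optional[str] = None,
        error: Optional[str] = None,
    ) -> Task:
        task = self._items[task_id]
        if new_status not in ALLOWED[task.status]:
            raise ValueError(
                f"illegal transition {task.status.value} -> {new_status.value}"
            )
        task.status = new_status
        task.output_key = output_key or task.output_key
        task.error = error or task.error
        task.updated_at = time.time()
        return task


def process_task(
    store: InMemoryTaskStore, task_id: str, convert: Callable[[str], str]
) -> Task:
    """Worker side: mark the task as running, convert, then record the outcome."""
    task = store.transition(task_id, TaskStatus.PROCESSING)
    try:
        output_key = convert(task.input_key)
    except Exception as exc:  # record any failure on the task itself
        return store.transition(task_id, TaskStatus.FAILED, error=str(exc))
    return store.transition(task_id, TaskStatus.COMPLETED, output_key=output_key)


if __name__ == "__main__":
    store = InMemoryTaskStore()
    task = store.create("uploads/holiday.mp4")
    done = process_task(store, task.task_id, lambda key: "spatial/" + key)
    print(done.status.value, done.output_key)
```

Keeping the state in a store rather than in the worker means the API can answer status queries at any time without waiting on the long-running conversion, which is the property an asynchronous design like the one described relies on.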

Design Principles

- Memory is emotional, not accurate — optimised for tone and feeling, not photorealism

- Immersion should be gentle — no gimmicks or artificial effects, just atmosphere

- Technology should feel invisible — users engage with memories, not mechanics

Status

Active development.